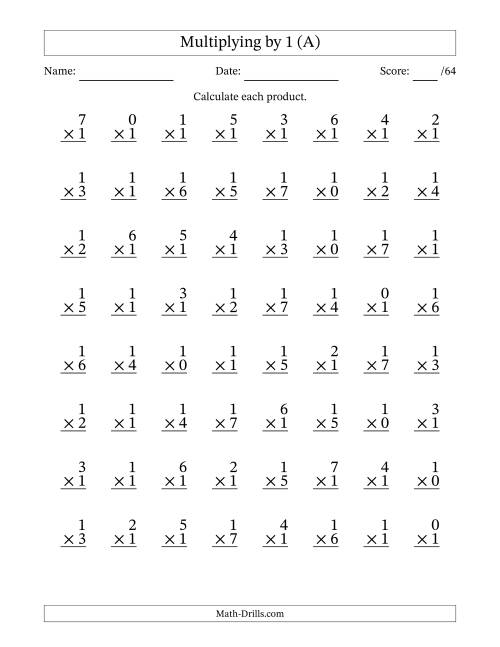

Multiplication Facts to 49 with Target Fact 1 (A) | multiplication x4

Multiplication Facts to 49 with Target Fact 1 (A) | multiplication x4[/caption]

multiplication x4

Cekirdekler API is an open-source C# OpenCL wrapper that load-balances among multiple OpenCL-capable devices, adds pipelining for extra performance, and lets users run their genuine C99 code on all GPUs, CPUs, and even FPGAs in their system.

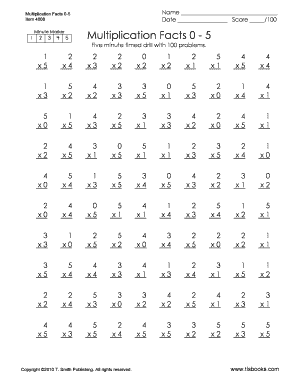

[caption id="" align="aligncenter" width="400px"] Multiplication Facts Practice: x0 through x12 | Multiplication ... | multiplication x4

Multiplication Facts Practice: x0 through x12 | Multiplication ... | multiplication x4[/caption]

In short, it speeds up a simple hot-spot bottleneck of a program by 10x, 100x, or even 1000x.

The project was pushed to GitHub a few weeks ago:

https://github.com/tugrul512bit/Cekirdekler/wiki

with its C++ part:

https://github.com/tugrul512bit/CekirdeklerCPP

(New information is added incrementally at the end, and new DLL files are always placed before the Introduction. See the changelog section at the end.)

(The DLL files are now built on an FX8150, with the console logging bug for non-console apps fixed.)

Simple usage cases in Unity Game Engine (computing on Vector3 arrays and primitive arrays with an R7-240 GPU and a CPU):

https://www.youtube.com/watch?v=XoAvnUhdu80

https://www.youtube.com/watch?v=-n_9DXnEjFw

https://www.youtube.com/watch?v=7ULlocNnKcY

Generally, with all thin wrappers of OpenCL, users need to implement all buffer copies and event handling themselves. This API takes care of that, and users only need to select what is to be done with simple API commands. A single line to declare a device, all devices, or a sub-group of devices depending on their vendors, compute units, or memory sizes. A single line to declare a number cruncher that holds an OpenCL kernel written in the C99 language and passed as a simple multi-line string. A single line to declare an array backed by a buffer in C++ (optionally), or just keep the user's C# array and enhance it. A single line to compute.

The zip files given at the beginning are for lazy developers. They are built on a Celeron N3060, so don't expect miracles. I advise you to visit the GitHub address I've given, download the whole project, and build it on your computer; it's open source after all. That's the best way for performance and security.

Just in case the zip files are used:

Main namespaces are:

Let's assume a developer needs to add the value of PI to all elements of an array

then he/she needs to write this:

This makes the addition happen using all GPUs and all CPUs in the system, by running the C99 code in the string on the array elements.

The parameter "1" in the compute method is the compute-id, which means that the next time a compute method with the same compute-id is reached, the load balancer will trade some workitems between all devices to minimize the overhead of the compute method.

The parameter "1024" in the compute method is the total number of workitems distributed to devices. If there are two GPUs in the system, both devices start with 512 workitems each, then converge to a time-minimizing point later with more repetitions of compute.
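The equal starting split and the later convergence can be pictured with a small Python sketch. It is only an illustration and not the API's actual balancer: `initial_split` and `rebalance` are hypothetical names, and redistributing in proportion to measured throughput is an assumed stand-in for whatever heuristic the library really uses.

```python
def initial_split(total_workitems, device_count):
    """Give every device an equal share of workitems, as on the first compute call."""
    share = total_workitems // device_count
    shares = [share] * device_count
    # Any remainder goes to the last device so the shares still sum to the total.
    shares[-1] += total_workitems - share * device_count
    return shares


def rebalance(shares, seconds_per_device, step):
    """Assumed heuristic: redistribute in proportion to measured throughput,
    rounded down to multiples of `step`, keeping at least one step per device."""
    total = sum(shares)
    throughput = [s / t for s, t in zip(shares, seconds_per_device)]
    scale = total / sum(throughput)
    new_shares = [max(step, int(round(tp * scale)) // step * step)
                  for tp in throughput]
    # Hand whatever the rounding left over to the fastest device.
    fastest = throughput.index(max(throughput))
    new_shares[fastest] += total - sum(new_shares)
    return new_shares


shares = initial_split(1024, 2)
print(shares)                                  # [512, 512]
print(rebalance(shares, [0.010, 0.030], 256))  # [768, 256]
```

In this toy run the device that finished in 10 ms ends up with three times the work of the one that needed 30 ms, which is the kind of time-minimizing point the text describes.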

The default value for the workgroup size is 256 (a workgroup is OpenCL's definition of the smallest memory-sharing group of threads that run in the same compute unit). If one needs it to be 64:

When more than one buffer is needed in kernel code:

they can be added from host-side like this:

such that the arrays f, g, and h must be in the same order as the kernel parameters with the __global memory specifier. Then f is linked to a, g is linked to b, and h is linked to c.

If a developer needs more performance, pipelining can be enabled:

The parameter with zero is the offset where compute begins, so each thread gets a global id shifted by this amount. The true value enables pipelining; it is false by default. When enabled, the API partitions each device's workload into 4 smaller works and pushes them in an event-driven pipelined manner so these parts hide each other's latencies. By default, the pipeline type is event-driven. There is also a driver-controlled version which uses 16 command queues without event synchronization but with complete blobs (each with its own read, compute, and write combined) to hide much higher latencies between command queues. Driver-controlled pipelining is enabled by adding another true value:

This needs more CPU threads to control the uploading and downloading of all blobs' data. Some systems are faster with event-based pipelining, with separate reads hiding behind computes or separate writes; some systems are faster with the driver-controlled version.

The number of blobs for pipelining must be at least 4, has to be a multiple of 4, and is 4 by default. This value can be changed by adding it after the pipeline type:

Sometimes even pipelining is not enough because of unnecessary copies between C# arrays and C++ OpenCL buffers; then one can adjust a field of the array wrapper to give it zero-copy access:

This immediately creates a C++ array inside, copies the values of the C# array to this C++ array, and uses it for all compute methods it calls. If it will be used more than once, it will decrease GPU-to-host access timings greatly. If a developer needs to start with C++ right away:

This creates and uses C++ arrays by default. There is also ClFloatArray that can be passed to this as initialization. The API has these types for arrays: float, double, int, uint, char, byte, long.

The ClNumberCruncher class automatically handles CPUs, iGPUs, and any other RAM-sharing devices as streaming processors to gain the advantage of zero-copy reads/writes within the kernel. This is especially useful in low compute-to-data scenarios, as in the "adding PI" example at the beginning.

The streaming option is also enabled for all devices by default, so devices may not use dedicated memories.

When a developer needs to disable this feature for discrete GPUs, a parameter needs to be given the value false:

Developers can also choose devices for GPGPU in a more explicit way:

The example above selects all Intel platforms, then selects all devices in them that share system RAM, effectively selecting the CPU and its iGPU, which is better for streaming data. There are a lot of different methods to pick devices depending on their specialties such as memory size, number of compute units, and benchmarks (the last one in future versions).

When performance is not satisfactory, buffer copies need to be optimized carefully. By default, Cekirdekler API copies all arrays to all device buffers, computes, and reads partial results back from all devices. This duplicates some unneeded data on all devices. When devices need to read only their own part of an array, a field needs to be set:

Then, if device-1 computes a portion P of the array, it reads only that portion of the array, and device-2 reads the rest of it. After compute, both write their results to the array.

There are several flags to inform the API about how buffers will be handled:

"read" instructs the API that the array will be read as a whole (unless partial is set) before compute.

"write" instructs the API that the array will be written to, but partially by each device, the opposite of partialRead.

If there is only a single device and an array needs to be computed many times without copying to/from the host, all three fields need to be set to false.

Another important part of buffer handling is the "array elements per workitem" value. The API interprets this value as equal for all workitems. For example, if there are 1024 workitems and each workitem loads, computes, and writes only float4-type variables, it is the developer's responsibility to choose "4" for the elementsPerWorkItem value while using a float array on the host side.

The sample above enables 4x the number of elements to be copied to devices. If a device runs 400 workitems, that device now gets 1600 float elements from the array. The first workitem works on elements 0-3, the second workitem works on elements 4-7, and so on.
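The mapping from workitems to host-array elements is plain multiplication. The Python sketch below reproduces the numbers from the paragraph above; `element_range` is a hypothetical helper written for illustration, not part of the API.

```python
def element_range(first_workitem, workitem_count, elements_per_workitem):
    """Half-open range [start, stop) of host-array elements that a run of
    consecutive workitems touches."""
    start = first_workitem * elements_per_workitem
    stop = (first_workitem + workitem_count) * elements_per_workitem
    return start, stop


print(element_range(0, 400, 4))  # (0, 1600): 400 workitems cover 1600 floats
print(element_range(0, 1, 4))    # (0, 4): first workitem, elements 0-3
print(element_range(1, 1, 4))    # (4, 8): second workitem, elements 4-7
```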

Getting some helpful information from the console (will be a file in future versions) is also easy:

Selected platforms:

Selected devices:

Load balancer distributing workitems at each compute method call:

You can find more detailed information on the wiki page of the GitHub repository.

Quote:

Important info: If the total work is salt or sand, then the load balancer is trading grains between devices. Grain size is equal to the local workgroup size multiplied by the number of pipeline blobs. If pipelining is disabled, then grain size is just the local workgroup size. If the grain size is very small compared to the global size, then load balancing becomes finer grained.

When there are M GPUs in the system, the global size must be a minimum of M * grain size.

Each device has to have a minimum of 1 grain (for example, 256 threads). Devices can't completely sell all of their grains.

Increasing pipelining increases grain size, which makes it harder to load balance.

Similarly for buffers, one now needs to keep in mind: multiply everything by the "numberOfElementsPerWorkItem" of the array to know how much data it copies.

Example: 2k workitems shared between 2 devices, 1.5k and 512. If pipelining is activated with 4 blobs and the workgroup size is 128, then these two devices can trade only 512 workitems at a time and must each keep a minimum of 512 workitems. This is a very bad example of load balancing; the grains are not fine. Now assuming the kernel uses float16 but the host side is given a byte array (such as one that came directly from TCP/IP), then the developer needs to set the "numberOfElementsPerWorkItem" value of the byte array to 16*4, because each float is 4 bytes and each workitem uses 16-float structs.
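The grain arithmetic in this example can be checked with a few lines of Python. This is a sketch of the rules as stated in the text; `grain_size` and `min_global_size` are hypothetical helper names and do not come from the API.

```python
def grain_size(local_size, pipeline_blobs=None):
    """Workitems per grain: the local size alone, or the local size times
    the blob count when pipelining is enabled."""
    if pipeline_blobs is None:
        return local_size
    if pipeline_blobs < 4 or pipeline_blobs % 4 != 0:
        raise ValueError("blob count must be a multiple of 4 and at least 4")
    return local_size * pipeline_blobs


def min_global_size(device_count, grain):
    """Every device must hold at least one grain."""
    return device_count * grain


grain = grain_size(128, 4)
print(grain)                      # 512 workitems per grain
print(2048 // grain)              # 4 grains in total for 2k workitems
print(min_global_size(2, grain))  # 1024 workitems needed for two devices
print(16 * 4)                     # 64 byte elements per float16 workitem
```

With only 4 grains to move between two devices, the balancer can shift work in 25% steps at best, which is why the text calls the example a bad one.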

Edit:

Kernels can be repeated, or different kernels can be run consecutively by name, each separated by a space, comma, semicolon, newline character "\n", or minus character. (These repeats don't change load partitioning and are profiled as one operation.) (This seems useful only for single-device usage.)

Edit-2: Example of an O(N²) algorithm with a high data re-use ratio but a low compute-to-data ratio

Code:

This OpenCL kernel does 4096*4*(1 addition + 1 addition + 1 multiplication) per workitem per compute. The host side executes 4096 workitems, so each compute method does 201M floating point operations and 537 MB of RAM access. When the load balancer converges, the iGPU completes most of the work in as little as 5 ms, which means 40.2 GFLOPs (5 of max theoretical value), because the compute-to-data ratio is low in the innermost loop. The CPU cannot get even close because the CPU is also serving as the scheduler for the OpenCL devices.
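The figures above follow from simple arithmetic, reproduced here in Python as a sanity check. The operation counts come from the text; the two 4-byte float reads per inner iteration are an assumption made here because they reproduce the quoted 537 MB, and the kernel itself is not shown.

```python
iterations_per_workitem = 4096 * 4
flops_per_iteration = 3   # 1 addition + 1 addition + 1 multiplication
workitems = 4096
compute_seconds = 0.005   # the 5 ms quoted for the converged case

total_flops = iterations_per_workitem * flops_per_iteration * workitems
gflops = total_flops / compute_seconds / 1e9

# Assumption: each inner iteration reads two 4-byte floats.
bytes_per_iteration = 2 * 4
total_bytes = iterations_per_workitem * workitems * bytes_per_iteration

print(total_flops)       # 201326592, about 201M operations
print(f"{gflops:.2f}")   # 40.27, in line with the quoted 40.2 GFLOPs
print(total_bytes)       # 536870912, about 537 MB
```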

iGPU has 12 compute units = 96 shaders

CPU has 2 cores but 1 core is chosen = 4 arithmetic logic units

Output:

Now the same program on an FX8150 + R7-240 (much stronger) system:

A stronger GPU beats a stronger CPU. This is probably caused by the CPU implementation not having enough registers for all threads, having fewer threads in flight, and having slower memory (the same memory is also used for API buffer copies).

Example for streaming data with the same host code but a different kernel and 4M workitems with 16M array elements:

Even a single CPU core has streaming performance comparable to its iGPU.

Output:

Changelog(v1.1.5):

When kernel parameters interpret differently typed host arrays, element alignment conditions must be met.

No need to put -1 for both the number-of-cores and number-of-GPUs parameters in the ClNumberCruncher constructor when choosing devices explicitly (these parameters now exist only for implicit device selection).

Changelog(v1.1.6)

Changelog(v1.1.9)

In the example above, vertices is an array of Vector3 (this is from a working Unity example).

This automatically sets the "numberOfElementsPerWorkItem" field according to the bytes per struct, so there is no need to set it, but it can be read back to check.

Beware! OpenCL treats float3 (the kernel-side struct) differently for each vendor. So use Vector3 and similar 3D elements as pure floats, multiply the indexer by 3, and add 1 for y, 2 for z (x is already at offset zero).
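The indexing rule is easy to get wrong, so here is a small Python sketch of it. The names `flatten` and `component_index` are hypothetical and only illustrate the layout; in a kernel the same arithmetic would be applied to the global id.

```python
X, Y, Z = 0, 1, 2  # offsets of the components inside one 3D element


def flatten(vectors):
    """Lay out (x, y, z) tuples as one flat list of floats, the way a
    Vector3 array looks when the kernel treats it as plain floats."""
    return [component for vector in vectors for component in vector]


def component_index(element_index, component):
    """Index of one component in the flat array: multiply by 3, add the offset."""
    return element_index * 3 + component


flat = flatten([(1.0, 2.0, 3.0), (4.0, 5.0, 6.0)])
print(flat[component_index(1, X)])  # 4.0
print(flat[component_index(1, Y)])  # 5.0
print(flat[component_index(1, Z)])  # 6.0
```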

Changelog(v1.2.0)

Here is a gif showing how the pipeline works:

Example of building a pipeline:

Single stage to compute x + 1:

Single stage to compute x*5:

Binding two stages together and creating the pipeline:

Pushing data to the pipeline, getting the result to an array:

Now it computes (x + 1)*5 for each element of the array and uses two GPUs concurrently, one per stage, moving data at the same time with the help of double buffering.
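The overlap between stages can be pictured with a toy Python model. It is a simplification under stated assumptions, not the API: `TwoStagePipeline` and `push` are hypothetical names, both stages run one after another on the host instead of concurrently on two GPUs, and the one-push latency shown here may differ from the real pipeline, which also buffers its inputs and outputs.

```python
class TwoStagePipeline:
    """Toy model: on each push, stage 2 consumes what stage 1 produced on the
    previous push while stage 1 works on the newly pushed data."""

    def __init__(self, stage1, stage2):
        self.stage1 = stage1
        self.stage2 = stage2
        self._between = None  # buffer handed over from stage 1 to stage 2

    def push(self, data):
        # Stage 2 finishes the older batch (None while the pipeline is filling).
        if self._between is None:
            result = None
        else:
            result = [self.stage2(x) for x in self._between]
        # Stage 1 starts on the new batch; its output is swapped in for next time.
        self._between = [self.stage1(x) for x in data]
        return result


pipeline = TwoStagePipeline(lambda x: x + 1, lambda x: x * 5)
print(pipeline.push([1, 2, 3]))  # None: first batch is still inside the pipeline
print(pipeline.push([0, 0, 0]))  # [10, 15, 20]: (x + 1) * 5 for the first batch
```

Results come out one push late in this model, which is the price of letting both stages stay busy at the same time.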

Changelog(v1.2.1)

Changelog(v1.2.2)

Now device-to-device pipeline stages can be initialized in the buildPipeline() method automatically with the parameters given in this method:

Changelog(v1.2.3)

Multiple kernel names in "device to device pipeline stages" can be grouped with the "@" separator instead of the " ", ",", ";" separators, so they read inputs from the host only once before the first kernel and write output to the host only once after the last kernel. Without "@", each kernel reads and writes its inputs and outputs, making a "multiple kernel stage" slower.

"@"-separated kernels run as a single kernel, so they all use a single global-local range value.

"a@b@c" N : 256 1 read 1 write

"a b@c" N,M : 256,128 2 reads 2 writes

Changelog(v1.2.4)

Changelog(v1.2.5)

Changelog(v1.2.6)

Added a kernel(s) repeat feature to the number cruncher. It reduces the API overhead that accumulates over hundreds of kernels.

Compatible with CekirdeklerCPP v1.2.6

Changelog(v1.2.7)

Added an enqueueMode flag for numberCruncher and device-to-device pipeline stage classes so they can do thousands of operations with just a single synchronization between host and device (up to 60x faster for light workloads). This works only for single-GPU, non-driver-pipelined, non-event-pipelined compute() operations, but it is usable in the device-to-device pipeline. Now it's possible to enqueue different global ranges and local ranges per kernel without falling back to the "device-to-device" or "repeat" features, and light workloads gain 60x performance (such as vector addition with only 1024 threads).

Changelog(v1.2.8)

Unnecessary clSetKernelArg issues are reduced.

Added ClArray.writeAll to get result arrays as a whole instead of just a number of elements. This is similar to non-partial reads with ClArray.read = true and ClArray.partialRead = false. If multiple GPUs are used, each GPU writes only 1 of the result arrays (instead of writing the same array, which is undefined behavior).

A C# char array bug was fixed (it was not getting a valid pointer to its data when passed to C++).

An enqueue mode performance query bug was fixed (it was not giving exact timing); it is now queryable by clNumberCruncher.lastComputePerformanceReport().

Changelog(v1.2.9)

For example, this kernel gains performance by setting readOnly for the first parameter and writeOnly for the second parameter

Changelog(v1.2.10)

Changelog(v1.2.11 and v1.2.12)

Matrix multiplication pipeline example:

Image processing (blur, rotate, resize, blend) pipeline example:

Changelog(v1.3.2)

Changelog v1.3.3 - v1.4.1

Smoothed k-means clustering example video, using the OpenCL 2.0 dynamic parallelism feature:

Comparison of performance of the "batch computing" feature against "pipelining" and "load balancing":

Grainsize revisited:

Note: there are some classes that have "cluster" in their names; those are in a pre-alpha stage, work in an unoptimized way, and are not translated to English (yet). The global offset parameter was being used by those classes.

Note2: The number cruncher object allocates 16 queues, which may not be suitable for some devices and may give CL_OUT_OF_RESOURCES or CL_OUT_OF_HOST_MEMORY even if RAM is not full. It works for AMD and Intel; I didn't try it with NVIDIA. I didn't even get close to any FPGA, though I'd like to. I heard their OpenCL compile times are hours!

Note3: ClNumberCruncher doesn't check whether the compiler is available, so that control logic will be added to ClNumberCruncher in the future to make it more thread-safe. For now, build all ClNumberCruncher instances serially, with locks. Compute methods of different ClNumberCruncher instances are also thread-safe, but the same instance can't be used in different threads for the compute method.

Note4: All devices, platforms, and everything else release their C++ (unmanaged) objects upon destruction, so the user may never need to dispose() them (unless some tight memory control is needed).

For the latest version, please visit the GitHub repository and feel free to add an "issue" if you have a problem related to Cekirdekler API.

Thank you for your time.

If you have written a complete image resizer with this, you will get an instant speedup whenever you put another GPU into the case, whether it is from the same vendor or not, the same tier or not. Even overclocking one of the cards will have a positive effect on performance, if the image is big enough to have finer-grained load balancing.

Will keep adding more stuff after each new feature is added on GitHub.
